Release LLM Integration Container Plugin

The LLM Integration plugin brings AI-powered automation to Digital.ai Release. With it, you can connect to any Large Language Model (LLM) provider, call tools on any MCP (Model Context Protocol) server, and build autonomous AI agents that combine reasoning and tool execution — all from within your release workflows, without writing integration code.

This plugin is in beta and provided for evaluation purposes only. It is not recommended for use in production environments.

When configuring connections to LLM providers and MCP servers, use API tokens with the minimum permissions required. Avoid overly permissive tokens, as they can allow unintended actions by AI agents. Regularly rotate credentials and review access as needed.

What you can do

- Call any MCP server tool without writing an integration plugin — connect to GitHub, Jira, Agility, or your own custom MCP server and invoke its operations directly from a release task.

- Prompt any LLM and use the response as a variable in subsequent tasks — summarize output, classify status, extract data, or generate content as part of a pipeline.

- Run AI agents that autonomously plan and execute multi-step workflows, deciding which MCP tools to call based on their goal.

- Start interactive chats with an LLM from within a running release task — useful for approvals, troubleshooting, or human-in-the-loop decision making.

- Mix and match MCP servers and LLM providers per task to get the right tool for each job.

Prerequisites

Before you begin, ensure that you have the following:

- The LLM Integration plugin installed in Release. See Install the LLM Integration plugin.

- A Digital.ai Release Runner configured to run container-based tasks.

- Access to an LLM service (Google Gemini, OpenAI, Digital.ai LLM, or any OpenAI-compatible endpoint).

- An MCP server endpoint (optional; required only for MCP and Agent tasks).

Install the LLM Integration Plugin

Install from the Plugin Gallery (recommended)

- In Release, navigate to Manage Plugins > Plugin Gallery.

- Search for LLM Integration.

- Click Install next to the plugin.

Install manually from GitHub

If you prefer to download and upload the zip yourself:

- Go to Release LLM Integration Releases.

- Click Assets and download the latest plugin zip file.

- In Release, navigate to Manage Plugins > Installed Plugins.

- Click Upload and select the downloaded zip file.

Try it out with the Dev Environment

The plugin repository includes a ready-to-run Docker Compose environment that starts a full local Release stack — including Release itself, a Remote Runner, a Release MCP server, and local AI models. It is the fastest way to explore the plugin.

What the dev environment includes

| Service | Description |
|---|---|
| digitalai-release | Release server at http://localhost:5516, with SmolLM2 and Llama 3.2 bundled as local AI models |
| digitalai-release-remote-runner | Runner that executes container tasks |
| digitalai-release-mcp | MCP server that exposes Release operations (list releases, folders, templates, etc.) as tools |
| container-registry | Local Docker registry for plugin images at localhost:5050 |
| container-registry-ui | Registry browser at http://localhost:8086 |

Start the environment

Prerequisites

- Docker Desktop (or Docker Engine with Compose v2)

- Add the following entries to your /etc/hosts (or C:\Windows\System32\drivers\etc\hosts):

127.0.0.1 host.docker.internal

127.0.0.1 container-registry
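To confirm the two hosts entries are in place before starting the stack, a small check like the following can help. This is a minimal sketch, not part of the plugin: the function name `missing_host_entries` and the sample text are illustrative assumptions, and in practice you would pass in the contents of your own hosts file.

```python
# Hypothetical helper: reports which required hostnames are not mapped
# to the expected IP address in the given hosts-file text.
REQUIRED = {
    "host.docker.internal": "127.0.0.1",
    "container-registry": "127.0.0.1",
}

def missing_host_entries(hosts_text, required=REQUIRED):
    found = {}
    for line in hosts_text.splitlines():
        line = line.split("#", 1)[0].strip()  # ignore comments and blank lines
        if not line:
            continue
        ip, *names = line.split()
        for name in names:
            found.setdefault(name, ip)  # first mapping wins
    return sorted(host for host, ip in required.items() if found.get(host) != ip)

sample = """
127.0.0.1 localhost
127.0.0.1 host.docker.internal
"""
print(missing_host_entries(sample))
```

For a real check, read the file first, for example `missing_host_entries(open("/etc/hosts").read())`, and add any hostnames the function returns.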

Start all services

docker compose -f dev-environment/docker-compose.yaml up -d --build

Wait until the digitalai-release-setup container has exited — that is the signal that Release is ready. Log in with admin/admin at http://localhost:5516.

Stop all services

docker compose -f dev-environment/docker-compose.yaml down

Build and publish the plugin

The build.sh (or build.bat on Windows) script builds the plugin container image, pushes it to the local registry, and installs the plugin into the running Release instance.

macOS / Linux

sh build.sh --upload

Windows

build.bat --upload

You can re-run this command after code changes; the new version will be picked up without restarting Release.

Load the demo templates

The repository includes a set of example templates that demonstrate each task type, as well as pre-configured MCP server connections and AI model connections.

Add your API keys

Copy the example secrets file and fill in your credentials:

cp setup/secrets.xlvals.example setup/secrets.xlvals

Edit setup/secrets.xlvals and supply the keys you want to use:

| Key | Description |
|---|---|
| GEMINI_API_KEY | Google Gemini API key |
| OPENAI_API_KEY | OpenAI API key |
| DAI_LLM_API_KEY | Digital.ai LLM Service API key |
| GITHUB_TOKEN | GitHub token (for GitHub MCP examples) |
| AGILITY_KEY | Agility bearer token (for Agility MCP examples) |

You only need the keys for the providers you want to test. The local AI models (SmolLM2, Llama 3.2) work without any key.
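Before uploading the templates, it can be useful to see which providers you have actually configured. The sketch below assumes the secrets file uses simple `KEY=value` lines (properties style); that format and the helper name `configured_keys` are assumptions for illustration, so compare against setup/secrets.xlvals.example before relying on it. It reports only whether a key is set and never prints the value.

```python
# Hypothetical helper: shows which expected keys have a non-empty value.
EXPECTED_KEYS = [
    "GEMINI_API_KEY",
    "OPENAI_API_KEY",
    "DAI_LLM_API_KEY",
    "GITHUB_TOKEN",
    "AGILITY_KEY",
]

def configured_keys(xlvals_text, expected=EXPECTED_KEYS):
    values = {}
    for line in xlvals_text.splitlines():
        line = line.strip()
        # Skip blanks, comments, and lines that are not KEY=value pairs.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        values[key.strip()] = value.strip()
    return {key: bool(values.get(key)) for key in expected}

sample = """
# only Gemini and GitHub are filled in
GEMINI_API_KEY=example-value
OPENAI_API_KEY=
GITHUB_TOKEN=example-token
"""
for key, is_set in configured_keys(sample).items():
    print(key, "set" if is_set else "not set")
```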

Upload the templates

./xlw apply -f setup/mcp-demo.yaml

The templates are uploaded to a new AI Demo folder. Log in, open the folder, and run any of the example templates.

Demo templates

The setup/mcp-demo.yaml file provisions seven ready-to-run templates in the AI Demo folder, plus pre-configured connections for all MCP servers and AI models.

| Template | What it demonstrates |
|---|---|
| 1. MCP examples | Direct MCP tool calls (list Release folders, read a GitHub issue) with no LLM involved |
| 2. Prompt examples | The same prompt sent to five different models in parallel so you can compare results |
| 3. MCP + Prompt examples | Piping MCP output into an LLM prompt to process it further (extract a name, write a summary, gate on the result) |
| 4. Agent examples | Autonomous agents using one or two MCP servers to complete multi-step goals |
| 5. Interactive Chat example | A live chat session with an LLM embedded inside a running task |
| 6. Adaptive Orchestration example | A failure handler that injects an AI Agent task dynamically to diagnose and remediate the failure |
| LLM Shoot-out | Three models summarize the same text; a fourth model acts as a judge and ranks them |

Available Tasks

MCP Tasks

AI Tasks

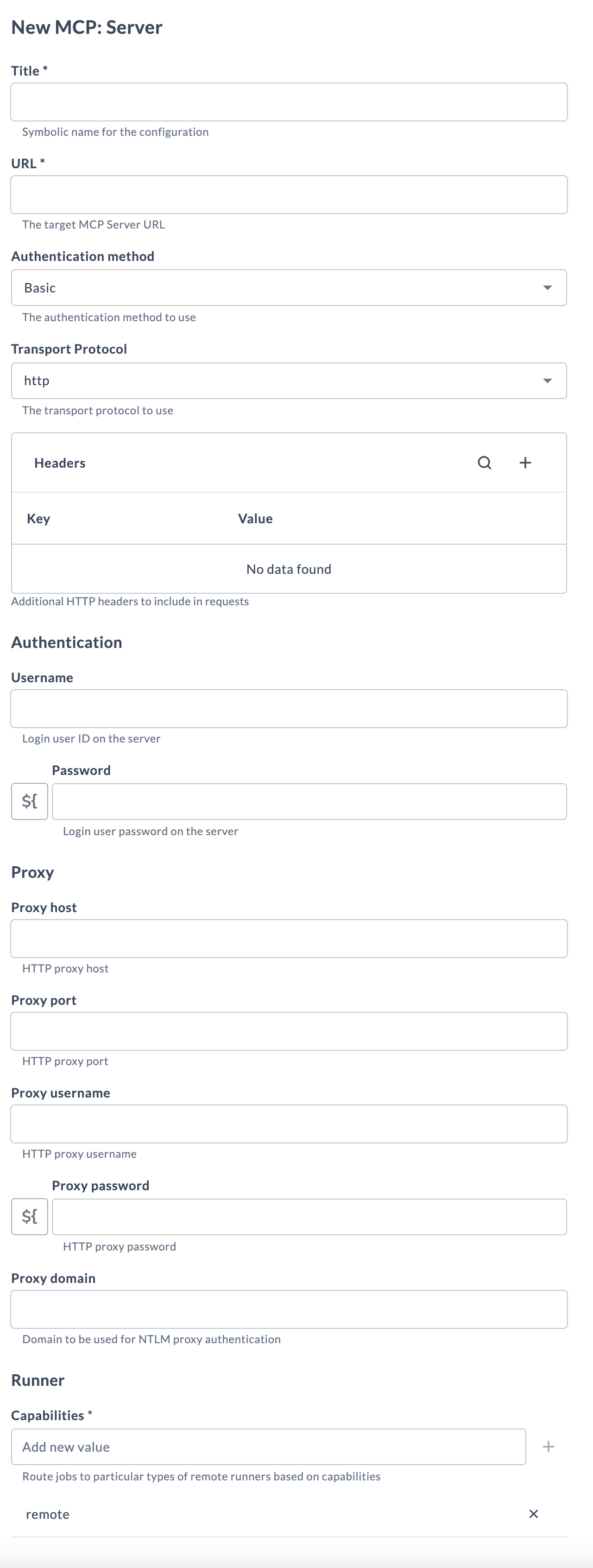

Set up a Connection to an MCP Server

- From the navigation pane, under CONFIGURATION, click Connections.

- Under HTTP Server connections, next to MCP Server, add a new connection.

- Fill in the following fields:

| Field | Description |
|---|---|
| Title | Name for the MCP server connection. |
| URL | The URL of your MCP server endpoint. |
| Authentication method | None, Basic, Bearer, or PAT. |
| Transport Protocol | http (Streamable HTTP, the MCP default) or sse (Server-Sent Events). |
| Headers | (Optional) Custom HTTP headers, for example custom bearer tokens passed as header values. |
| Username | Username for Basic authentication. |
| Password | Password or token for Bearer / Basic / PAT authentication. |
| Capabilities | Capability label that routes this task to the correct Runner. |

- Click Test to verify the connection, then Save.

Some MCP servers require proprietary headers rather than a standard Authorization header. Use the Headers field for these — for example, the Agility MCP server uses X-Agility-Bearer and X-Agility-Host.

Set up a Connection to an LLM Provider

The plugin supports three provider types. Gemini does not have an API URL field; the others do.

- From the navigation pane, under CONFIGURATION, click Connections.

- Under AI Model connections, next to OpenAI, Gemini, or Digital.ai LLM, add a new connection.

- Fill in the following fields:

| Field | Gemini | OpenAI | Digital.ai LLM |
|---|---|---|---|
| Title | ✓ | ✓ | ✓ |
| API URL | n/a | ✓ (default: https://api.openai.com/v1) | ✓ (default: https://api.staging.digital.ai/llm) |
| API Key | ✓ | ✓ | ✓ |
| Model | ✓ (default: gemini-2.5-flash) | ✓ | ✓ |

- Click Test, then Save.

The OpenAI connection type works with any OpenAI-compatible endpoint, including locally hosted models via Docker Model Runner. Point the API URL at http://model-runner.docker.internal/engines/v1 and set the model name accordingly (for example, smollm2 or llama3.2). No API key is required when using local models.
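To see what an OpenAI-compatible endpoint expects, the following sketch builds a chat completions request against the local model URL mentioned above. It only illustrates the public OpenAI request shape; how the plugin issues the call internally is not described here, and the helper name `build_chat_request` is an assumption. The request is constructed but not sent.

```python
import json
import urllib.request

def build_chat_request(api_url, model, prompt, api_key=None):
    """Build (but do not send) an OpenAI-style chat completions request."""
    url = api_url.rstrip("/") + "/chat/completions"
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if api_key:  # local models need no key, so the header is optional
        headers["Authorization"] = "Bearer " + api_key
    return urllib.request.Request(url, data=body, headers=headers, method="POST")

req = build_chat_request(
    "http://model-runner.docker.internal/engines/v1", "smollm2", "Say hello"
)
print(req.get_method(), req.full_url)
```

Sending the request with `urllib.request.urlopen(req)` should return JSON whose reply text sits under `choices[0].message.content`, provided the endpoint follows the OpenAI response format.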

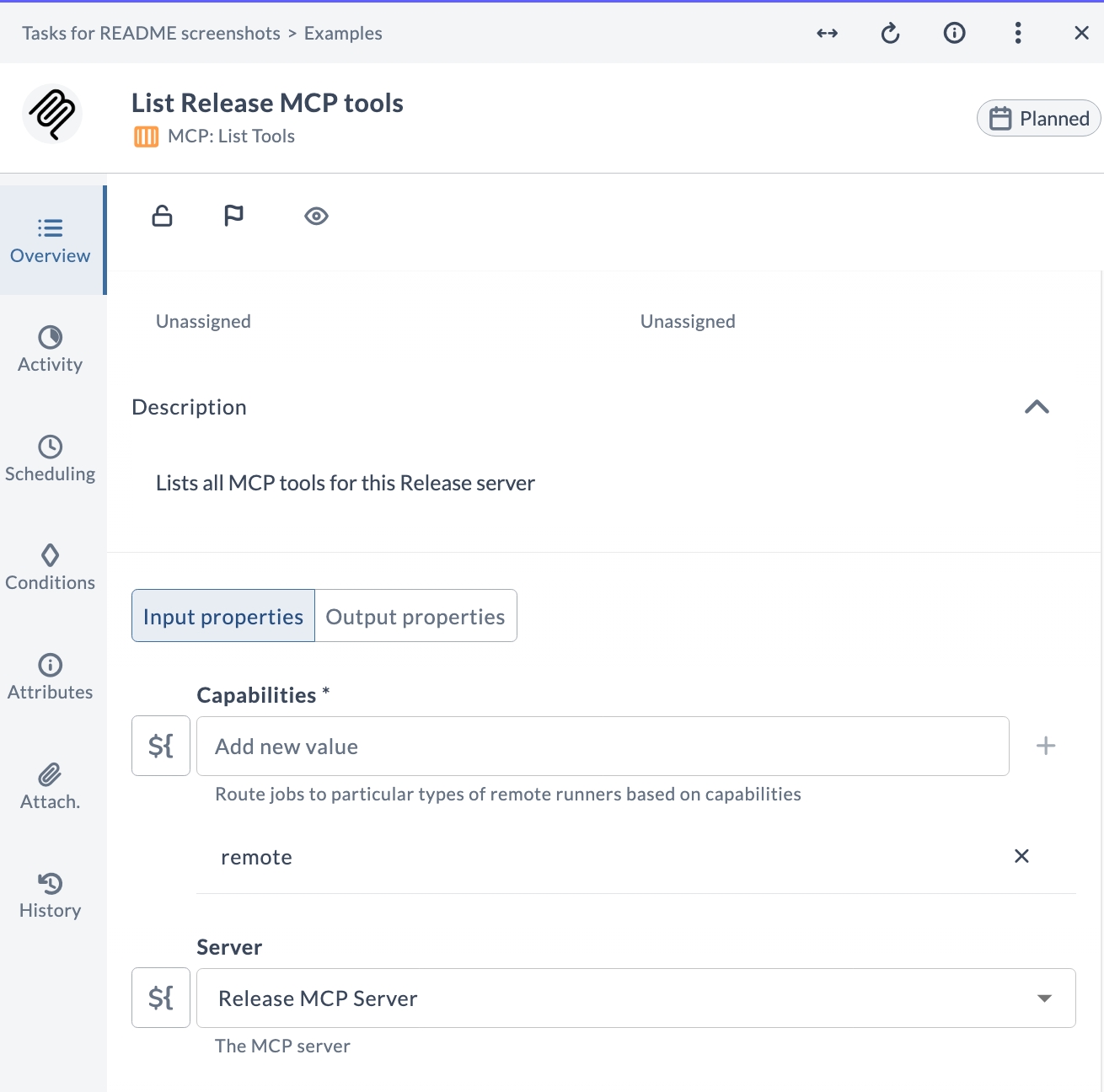

MCP: List Tools

The MCP: List Tools task queries an MCP server and returns all the tools it exposes, including their descriptions and input schemas. Use this task to discover what is available on a server before calling specific tools.

Task fields

| Field | Description |
|---|---|
| Capabilities | Routes the task to a runner with matching capabilities. |
| Server | The MCP server connection to query. |

Output properties

| Property | Description |
|---|---|
| tools | Map of tool name → description. |
| inputSchema | Map of tool name → JSON input schema. |
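For orientation, the sketch below shows the standard MCP `tools/list` JSON-RPC request and one way a server's reply could be turned into the two maps described above. The mapping function `to_output_maps` and the sample tool `list_folders` are hypothetical; the plugin's actual implementation may differ.

```python
# Standard MCP JSON-RPC request for listing tools.
LIST_TOOLS_REQUEST = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}

def to_output_maps(result):
    """Split an MCP tools/list result into name->description and name->schema maps."""
    tools = {t["name"]: t.get("description", "") for t in result["tools"]}
    input_schema = {t["name"]: t.get("inputSchema", {}) for t in result["tools"]}
    return tools, input_schema

# Illustrative server reply (the tool name is made up for this example).
sample_result = {
    "tools": [
        {
            "name": "list_folders",
            "description": "List folders",
            "inputSchema": {"type": "object", "properties": {}},
        }
    ]
}

tools, input_schema = to_output_maps(sample_result)
print(tools)
print(input_schema)
```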

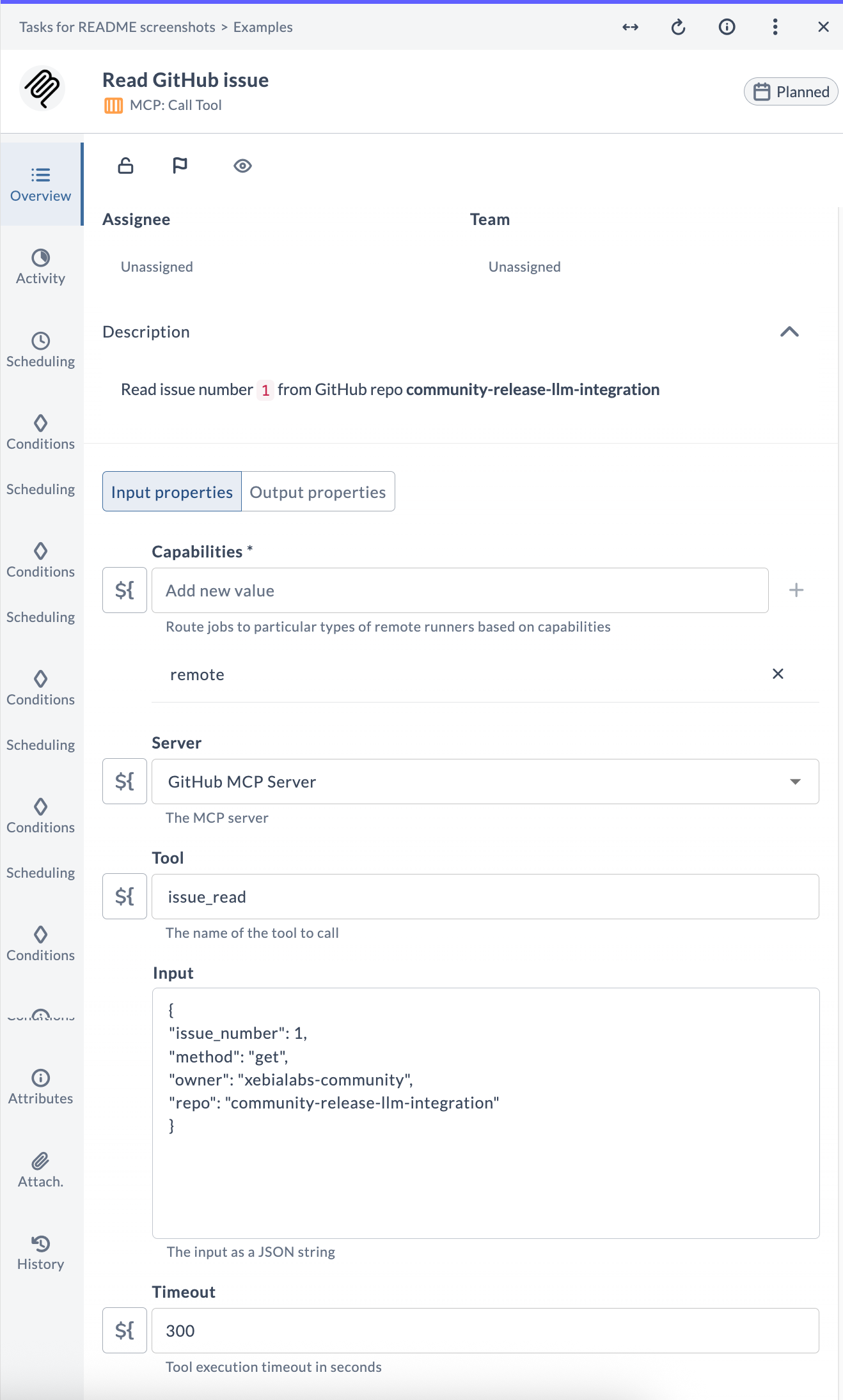

MCP: Call Tool

The MCP: Call Tool task executes a named tool on an MCP server and stores the output. No LLM is involved — it is a direct, deterministic invocation. Use it wherever you would ordinarily write an integration plugin.

Task fields

| Field | Description |
|---|---|
| Capabilities | Routes the task to a runner with matching capabilities. |
| Server | The MCP server connection to use. |
| Tool | The tool name to call. An interactive dropdown lists available tools from the selected server. |
| Input | Tool arguments as a JSON string. Leave empty if the tool takes no input. |
| Timeout | Maximum execution time in seconds (default: 300). |

Output properties

| Property | Description |
|---|---|
| result | The raw text output returned by the tool. |

Example — read a GitHub issue

{
  "issue_number": 1,
  "method": "get",
  "owner": "xebialabs-community",
  "repo": "community-release-llm-integration"
}

Example — list failed releases from the Release MCP server

{
  "request": {
    "status": "FAILED"
  }
}
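Because the Input field takes a JSON string, it can help to validate the string before pasting it into a task. The sketch below checks the input and wraps it in the standard MCP `tools/call` JSON-RPC request. The helper `build_call_request` and the tool name `example_tool` are placeholders, and whether the plugin builds its request exactly this way is an assumption.

```python
import json

def build_call_request(tool, input_json="", request_id=1):
    """Validate the Input string and wrap it in an MCP tools/call request."""
    # An empty Input field means the tool takes no arguments.
    arguments = json.loads(input_json) if input_json.strip() else {}
    if not isinstance(arguments, dict):
        raise ValueError("Input must be a JSON object")
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }

# The same arguments as the failed-releases example above.
task_input = json.dumps({"request": {"status": "FAILED"}})
request = build_call_request("example_tool", task_input)
print(json.dumps(request, indent=2))
```

A malformed string raises `json.JSONDecodeError` (a subclass of `ValueError`), so problems surface before the task runs.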

AI: Prompt

The AI: Prompt task sends a single prompt to an LLM and stores the response. Use it to process or transform text that came from an earlier step — summarize, classify, extract data, translate, or generate content.

Task fields

| Field | Description |
|---|---|
| Capabilities | Routes the task to a runner with matching capabilities. |
| Prompt | The text to send to the LLM. Supports Release variables using ${variable} syntax. |
| Model | The AI model connection to use. |

Output properties

| Property | Description |
|---|---|
| response | The full LLM response text. |

Example pattern — MCP output → Prompt

A common pattern is to pipe the output of an MCP: Call Tool task into a Prompt task to make it human-readable.

Run an MCP: Call Tool task first to store JSON output in ${releases}. Then use an AI: Prompt task with a prompt like:

Make a summary of the failed releases: ${releases}

The generated summary is available in the task output property response.
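Release resolves `${variable}` references itself before the prompt is sent. To preview what the model would receive, the effect can be imitated with Python's `string.Template`, which happens to use the same `${name}` syntax. This is only an illustration under that assumption: `render_prompt` is a hypothetical helper, and Release variable names that contain dots or other non-identifier characters are left untouched by this sketch.

```python
from string import Template

def render_prompt(prompt, variables):
    """Substitute ${name} placeholders; unknown names are left as-is."""
    return Template(prompt).safe_substitute(variables)

prompt = "Make a summary of the failed releases: ${releases}"
releases_json = '[{"title": "Demo", "status": "FAILED"}]'  # sample MCP output
print(render_prompt(prompt, {"releases": releases_json}))
```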

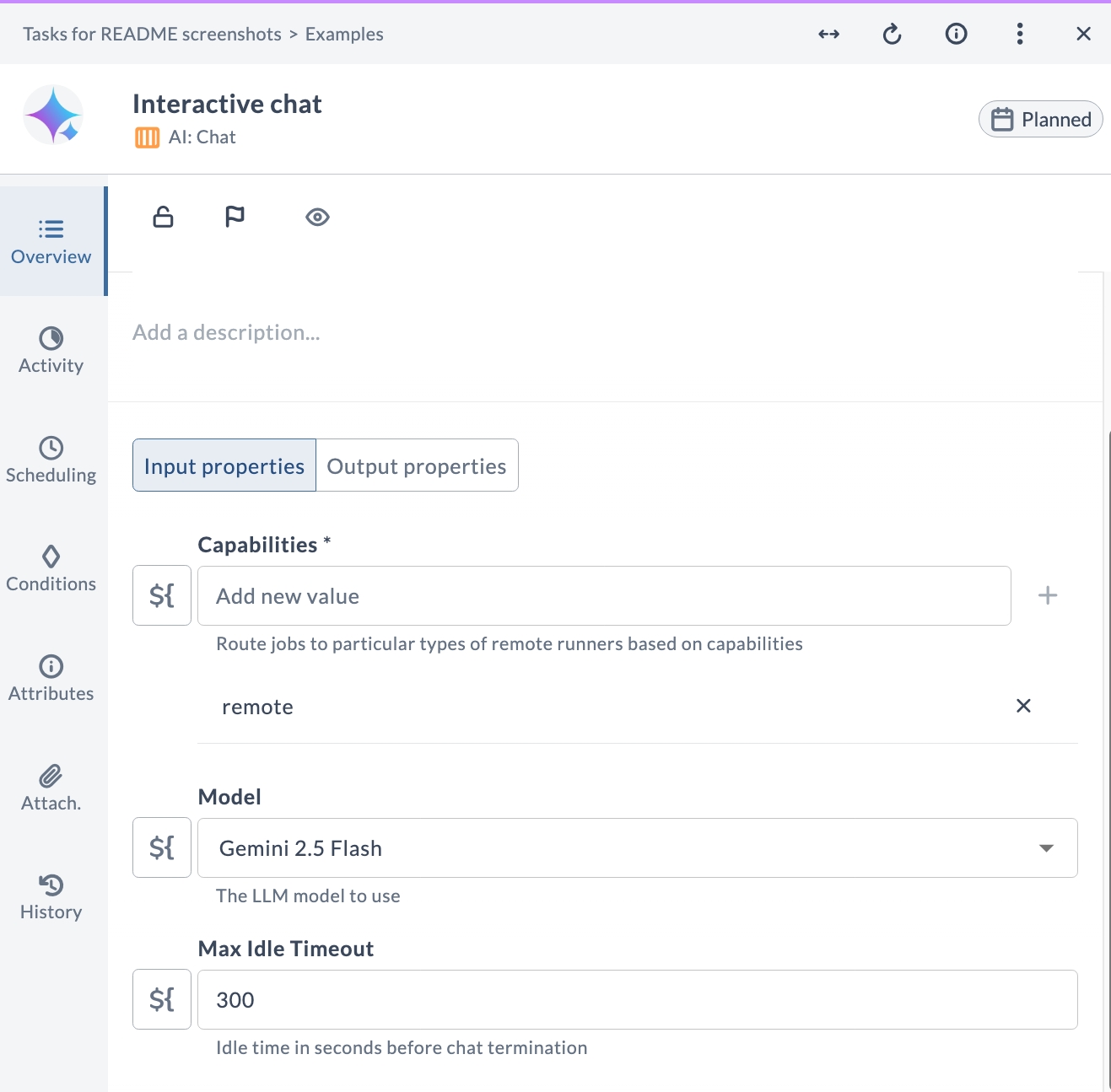

AI: Chat

The AI: Chat task starts a live conversational session with an LLM, hosted inside the task's Activity section. The session persists until the user sends Stop chat or the idle timeout expires.

Task fields

| Field | Description |
|---|---|
| Capabilities | Routes the task to a runner with matching capabilities. |
| Model | The LLM model to use. |
| Max Idle Timeout | Seconds of inactivity before the session ends automatically (default: 300). |

Output properties

| Property | Description |
|---|---|
| response | The last LLM message in the conversation. |

Each new comment you post in the task's Activity section is forwarded to the LLM as your next message. The model maintains the full conversation history for the duration of the session. Post Stop chat to end the session gracefully.
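The session behavior described above can be pictured as a simple loop over comments. The sketch below is a simplified model, not the plugin's code: `run_chat` and `echo_model` are hypothetical, the idle timeout is not modeled, and exact matching of the `Stop chat` text is an assumption.

```python
STOP_COMMAND = "Stop chat"

def run_chat(comments, model):
    """Feed each comment to the model with the full history until Stop chat."""
    history = []
    response = ""
    for comment in comments:
        if comment.strip() == STOP_COMMAND:
            break  # end the session gracefully
        history.append({"role": "user", "content": comment})
        response = model(history)  # the model sees every earlier turn
        history.append({"role": "assistant", "content": response})
    return response, history

def echo_model(history):
    # Stand-in for a real LLM call: reports the turn number and last message.
    user_turns = sum(1 for m in history if m["role"] == "user")
    return "reply %d to: %s" % (user_turns, history[-1]["content"])

response, history = run_chat(
    ["Why did it fail?", "What next?", "Stop chat", "ignored"], echo_model
)
print(response)
```

The value returned as `response` corresponds to the task's `response` output property: the last model message before the session ended.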

Typical use cases

- Collaborative troubleshooting — ask the model questions about a failed deployment while the release is paused.

- Human-in-the-loop decision making — have the model explain options and let an operator choose the next step.

- On-the-fly data analysis — paste log excerpts or JSON into the chat and ask the model to interpret them.

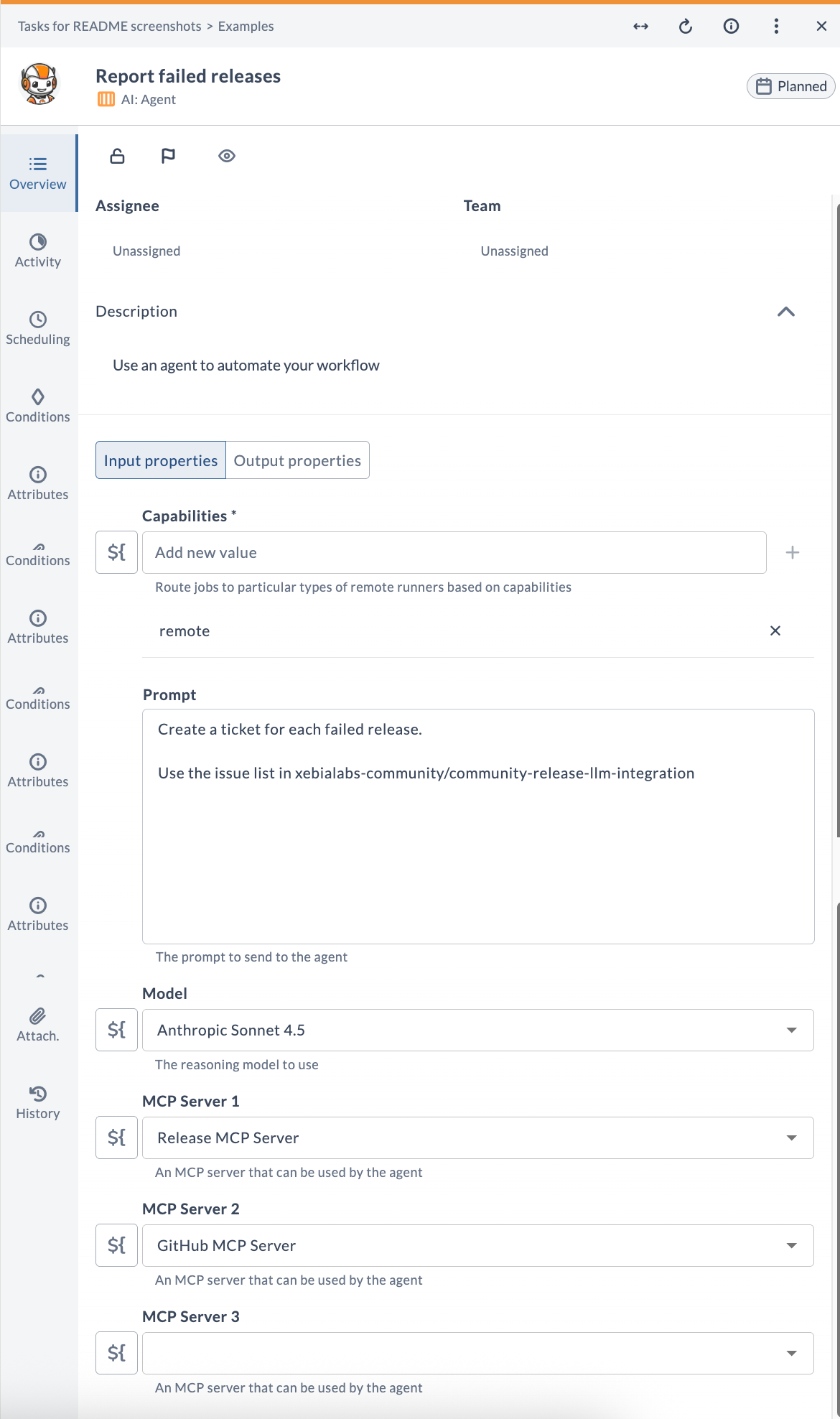

AI: Agent

The AI: Agent task gives an LLM access to one or more MCP servers and lets it autonomously plan and execute multi-step workflows to accomplish a goal described in natural language. The agent decides which tools to call, in what order, and what to pass as input — you only need to provide the goal.

Task fields

| Field | Description |
|---|---|
| Capabilities | Routes the task to a runner with matching capabilities. |
| Prompt | A natural-language description of the goal. |
| Model | The reasoning model to use. Prefer larger, more capable models for complex goals. |
| MCP Server 1 | Primary MCP server the agent can use. |
| MCP Server 2 | Additional MCP server (optional). |
| MCP Server 3 | Additional MCP server (optional). |

Output properties

| Property | Description |
|---|---|
| result | The agent's final response once the goal is completed. |

How it works

The agent receives your prompt together with a list of all tools available on the configured MCP servers. It iteratively calls tools, processes their output, and continues until it determines the goal is complete. Each step is recorded in a markdown report in the task's Activity section, so you can trace exactly what the agent did.

If the agent requires information it cannot obtain from the available tools, it ends its response with 🙋🏻 and the task is marked as failed. This prompts a human to re-run it with a clearer prompt or additional MCP access.
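The loop described in this section can be sketched in a few lines. Everything here is a simplified stand-in rather than the plugin's implementation: `run_agent`, the scripted model, the `list_releases` tool, and the `max_steps` limit are assumptions made for illustration.

```python
NEEDS_HUMAN = "🙋🏻"  # marker the agent appends when it needs human input

def run_agent(goal, model, tools, max_steps=10):
    """Call tools as directed by the model until it returns a final answer."""
    transcript = [{"role": "user", "content": goal}]
    report = []  # one markdown line per tool call, for traceability
    for _ in range(max_steps):
        action = model(transcript, sorted(tools))
        if "tool" in action:
            output = tools[action["tool"]](**action.get("input", {}))
            report.append("- called `%s`" % action["tool"])
            transcript.append(
                {"role": "tool", "name": action["tool"], "content": output}
            )
            continue
        final = action["final"]
        if final.rstrip().endswith(NEEDS_HUMAN):
            raise RuntimeError("Agent needs human input: " + final)
        return final, report
    raise RuntimeError("Agent did not finish within max_steps")

def scripted_model(transcript, tool_names):
    # Stand-in for an LLM: call one tool, then summarize its output.
    if transcript[-1]["role"] == "user":
        return {"tool": "list_releases", "input": {"status": "FAILED"}}
    return {"final": "Found: " + transcript[-1]["content"]}

tools = {"list_releases": lambda status: "1 release in state " + status}
result, report = run_agent("Summarize failed releases", scripted_model, tools)
print(result)
print("\n".join(report))
```

Raising an error when the marker is present mirrors the documented behavior of failing the task so that a human can step in.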

Example prompts

- Create a nice summary for the currently logged-in user. (requires a GitHub or identity MCP server)

- Analyze all templates in the "AI Demo" folder and report on tasks that are duplicated across templates. (requires Release MCP server)

- List all releases in Digital.ai Release. For the last release in FAILED state, create a GitHub issue in owner/repo to fix it. Mention Release title, date, and cause in the ticket. (requires both Release MCP and GitHub MCP servers)

Advanced: Adaptive Orchestration with Failure Handlers

A powerful pattern enabled by this plugin is adaptive orchestration: using a Release failure handler script to inject an AI Agent task dynamically into a running release when something goes wrong. The agent then diagnoses the failure and creates a targeted remediation plan, all without human intervention at the automation layer.

The demo template 6. Adaptive Orchestration example ships with a full working example of this pattern:

- A simulated MCP task fails (it calls a non-existent tool).

- The task's failure handler script runs, creating a new community-llm.LlmAgent task programmatically and inserting it into the current phase.

- The agent receives the failed task's ID and a structured prompt that instructs it to: retrieve the failure details via MCP, classify the failure, and create an "Adaptive Recovery" phase with human-approved remediation tasks.

- The original failed task is skipped, and the release continues with the agent as the next step.

This pattern can be adapted for real scenarios: failed deployments, infrastructure provisioning errors, or any situation where the corrective action depends on the exact failure details.

Best Practices

- Write specific prompts. Vague prompts produce vague results, especially for Agent tasks. Describe the goal, the expected output format, and any constraints (for example, do not modify production).

- Choose the right model for the task. Small local models (SmolLM2, Llama 3.2) are fast and free but less capable. Use a frontier model (Gemini Pro, GPT-4, Sonnet) for Agent tasks with complex reasoning requirements.

- Use Release variables to inject context. Reference ${variable} in prompts to include dynamic values such as environment names, previous task output, or release titles.

- Store sensitive values as encrypted variables. Never hardcode tokens or passwords in a prompt. Use Release's encrypted variable type and reference them via ${variable}.

- Set timeouts on MCP: Call Tool tasks. Some tools may be slow or hang. Set a conservative timeout to prevent tasks from blocking a release indefinitely.

- Add error-handling tasks after Agent tasks. Agent output is non-deterministic. Place a Gate or manual review task after critical agent steps so a human can verify the result before the release proceeds.

- Monitor LLM API usage. Agent tasks can make many tool and LLM calls in a single execution. Monitor API usage to avoid unexpected cost spikes.

Community Support

This is a community-supported plugin. For issues, questions, or contributions:

- GitHub Repository: community-release-llm-integration

- Report Issues: GitHub Issues